Solving new problems

We often manage to use unfamiliar gadgets or solve a new maths problem correctly on the first try by drawing on what we already know. This ability reflects our capacity for deductive reasoning and for flexibly transferring prior knowledge to new situations. The neural mechanisms that enable this kind of adaptive, intelligent behaviour remain a profound open question for neuroscience and cognitive psychology.

Dr Saurabh Vyas, based at Carnegie Mellon University, is addressing this challenge. His lab is developing sophisticated behavioural tasks that enable them to interpret neural activity during unique, one-off cognitive events, when a subject sees, does, or figures out something for the very first time. As a winner of the Emerging Neuroscientists Seminar Series 2025, Dr Vyas recently spoke at SWC. In this Q&A, he introduces his work and shares his excitement about the new capabilities of neuroscience.

What got you interested in the question of how the brain solves problems?

During my postgraduate studies, the questions that kept coming back to me were about our ability to think and reason: our ability to seemingly figure things out, even though we have never seen anything like that problem before. I guess, as an aside, I also grew up reading a lot of Sherlock Holmes. Despite it being fiction, there is some truth to our ability to take evidence about a bunch of different topics and use it to solve a new problem. I find that so fascinating.

I spent a lot of my PhD coming up with approaches that try to get at that, but the technology wasn't there. We didn't have the ability to record enough neurons to make sense of it. I didn't know how you could ever train animals to do any task that resembled what Sherlock Holmes does. Even if we somehow succeeded, we just didn't have the right conceptual framework.

But over the last five to seven years we’ve made a lot of progress on those fronts, not just me, but others in the field as well.

During my postdoc, I thought, if I'm going to do this, let's just go all in. I'm going to design a task that, while it isn't exactly what Sherlock Holmes does, has many of the features.

Now, for the first time, we have a task where we actually get an animal to do something new. Give him knowledge and then have him deploy that knowledge.

We did a lot of science and engineering, and I just fell in love with that endeavour. I'm starting my own group now, and we're going to keep pushing in that direction.

What changed that made you think you could finally tackle this problem?

I think there's both a conceptual answer and a technological one.

The technological answer is that, let's say you could get a subject into a position where they were truly thinking about a problem, you need to observe enough of the brain to be able to make sense of it. Not just record more neurons from one area, but also record from many different areas. A lot of what I did during my postdoc was develop technology that enabled that. As a graduate student, we could get maybe 10 neurons simultaneously, with probes that had 16 channels. And now we're two orders of magnitude above that. It’s changed from 16 to 1600. That just blew the doors open to being able to do things.

And on a conceptual level, if you can trust the neural activity that you are recording, and you can record enough of it, you can just do what psychologists do. Psychologists study behaviour on individual trials. A psychologist will have no problem coming to you and saying, in this exact moment, the person did X. Because look, I can measure his arm movement, I can measure his eye movements, and so on. We're on the cusp of being able to do exactly that with neural activity.

It’s hard for me to convey how exciting that is for neuroscience. We're in a position where we can now study how a subject solves a problem in a way that they may never have done before. By directly examining what the neural activity looks like in a particular moment, we can make sense of things that are often invisible at the level of behaviour.

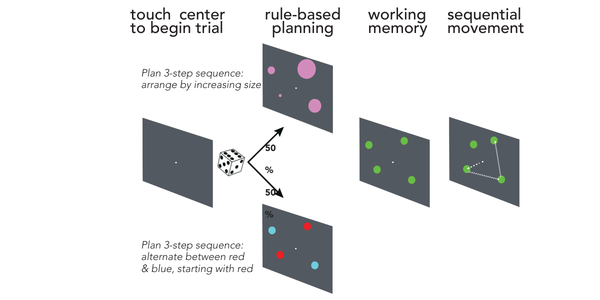

Can you describe the task you designed, and why it requires reasoning rather than simple learning to solve it?

An example I like to use is this: you learn how to multiply numbers in school, and you learn a whole method to do it. And eventually you get really good at it. If I asked you right now, what is 719 times 41, you probably won’t know the answer off the top of your head, but you know the method, and you could eventually come up with that answer.

That's essentially what the task is. The task isn't that I've just memorised all combinations of numbers, but rather, I know the steps. We can watch those steps unfold in the brain in real time.
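The multiplication analogy can be made concrete with a short sketch. This is only an illustration of "knowing the steps" rather than memorising answers; the function name `long_multiply` is hypothetical and has nothing to do with the actual task or analysis code.

```python
def long_multiply(a: int, b: int) -> tuple[int, list[int]]:
    """Multiply two non-negative integers with the schoolbook method.

    Returns the product and the partial products (one per digit of b,
    least significant digit first), so every step of the method is visible.
    """
    partials = []
    for place, digit_char in enumerate(reversed(str(b))):
        digit = int(digit_char)
        # One step of the method: multiply by a single digit,
        # then shift the result by that digit's place value.
        partials.append(a * digit * 10 ** place)
    # Final step: add up the partial products.
    return sum(partials), partials


product, steps = long_multiply(719, 41)
print(steps)    # the intermediate results: 719 x 1 and 719 x 40
print(product)  # the final answer
```

Nothing in the function stores the answer to 719 times 41 in advance; the result falls out of applying the same few steps to whatever numbers are given.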

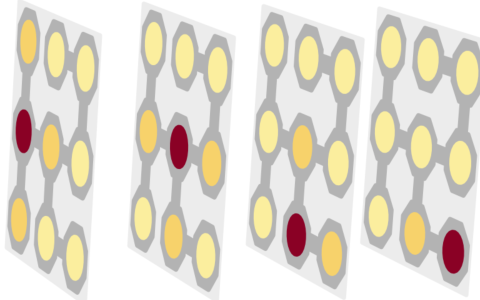

Schematic of the task design. The animal is presented with one of two puzzles to solve. The rules for the puzzles have been learned previously. The puzzle is then masked, and a solution needs to be held in working memory. Following a go-cue, the animal moves to touch the spots and solve the task.

What does that neural activity look like?

In the task that I've developed, the animal has to make three decisions to arrive at the final answer. We can see neural activity that resembles each of those decisions.

In the task, he has to reason, come up with an answer, hold that answer in his head, and then we ask him for the answer.

We’ve verified what we can see, because we can predict what answer he's going to give before he actually gives it.
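As a rough illustration of what "predicting the answer before he gives it" can mean, here is a toy decoder run on simulated firing rates. Everything in it is an assumption made for the sketch: the data are synthetic, the tuning of the simulated neurons is invented, and a nearest-centroid classifier stands in for whatever decoding method the lab actually uses, which the interview does not describe.

```python
import random


def simulate_trial(answer, n_neurons, rng):
    """Synthetic firing rates during the memory period (toy data, not real recordings)."""
    rates = []
    for i in range(n_neurons):
        preferred = i % 2  # hypothetical tuning: each neuron prefers one of two answers
        mean = 15.0 if preferred == answer else 10.0
        rates.append(rng.gauss(mean, 1.0))
    return rates


def centroid(trials):
    """Average firing rate of each neuron across a list of trials."""
    return [sum(column) / len(trials) for column in zip(*trials)]


def decode(rates, centroids):
    """Return the answer whose average activity pattern is closest to this trial."""
    def squared_distance(answer):
        return sum((r - c) ** 2 for r, c in zip(rates, centroids[answer]))
    return min(centroids, key=squared_distance)


rng = random.Random(0)
n_neurons = 20

# "Training" trials where the eventual answer is known.
train = {a: [simulate_trial(a, n_neurons, rng) for _ in range(50)] for a in (0, 1)}
centroids = {a: centroid(trials) for a, trials in train.items()}

# Held-out trials: decode from activity alone, then compare with the true answer.
test_answers = [rng.randrange(2) for _ in range(100)]
predictions = [decode(simulate_trial(a, n_neurons, rng), centroids) for a in test_answers]
accuracy = sum(p == a for p, a in zip(predictions, test_answers)) / len(test_answers)
print(f"decoded the upcoming answer on {accuracy:.0%} of held-out toy trials")
```

The logic of the check is the point here: the decoder only ever sees activity from before the response, so accuracy above chance on held-out trials is evidence that the answer is already present in that activity.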

You saw these reasoning signals in the prefrontal cortex before seeing them in the motor cortex - what does that tell us about how the brain organises complex problem solving?

Critically, the first thing we saw is that not all areas look the same. It seems not all the signals are everywhere. Motor cortex knows nothing about the future, and it knows nothing about the rule that should be used. Said differently, motor cortex is concerned with preparing and generating the immediate next movement. Prefrontal cortex, on the other hand, knows what you should do, and why you should do it in that way.

The big finding is that knowledge is represented in the prefrontal cortex, and it's organised in such a way that it facilitates flexibility.

So, unlike other tasks, we allowed the animals to choose the order of the decisions, the timing of those decisions, and the strategy by which those decisions were being made. We typically refer to this as ‘cognitive flexibility.’ One of the things that we've discovered is a mechanism by which the prefrontal cortex's organisation facilitates that flexibility.

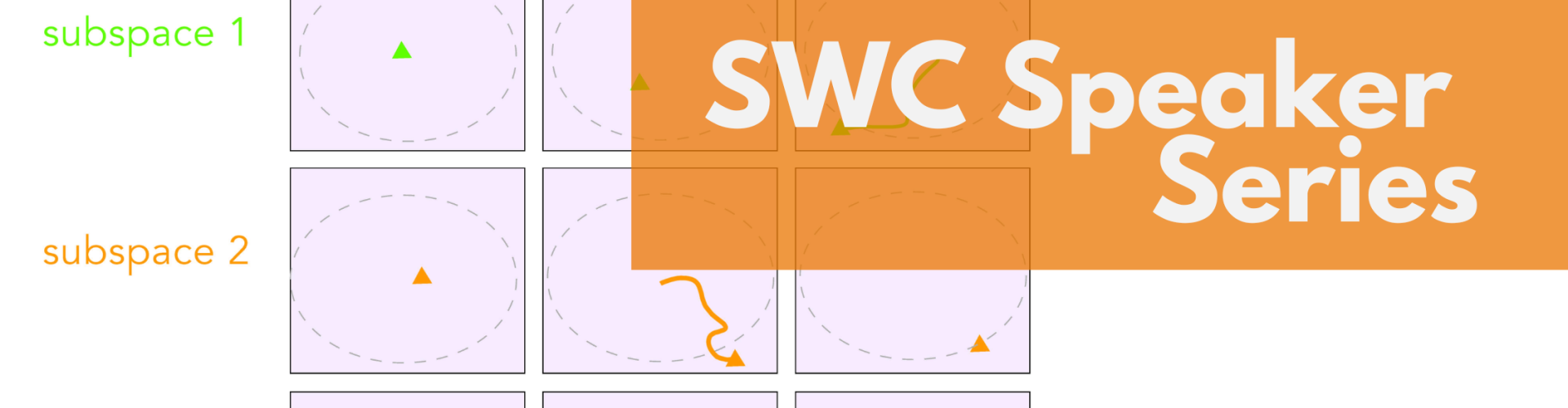

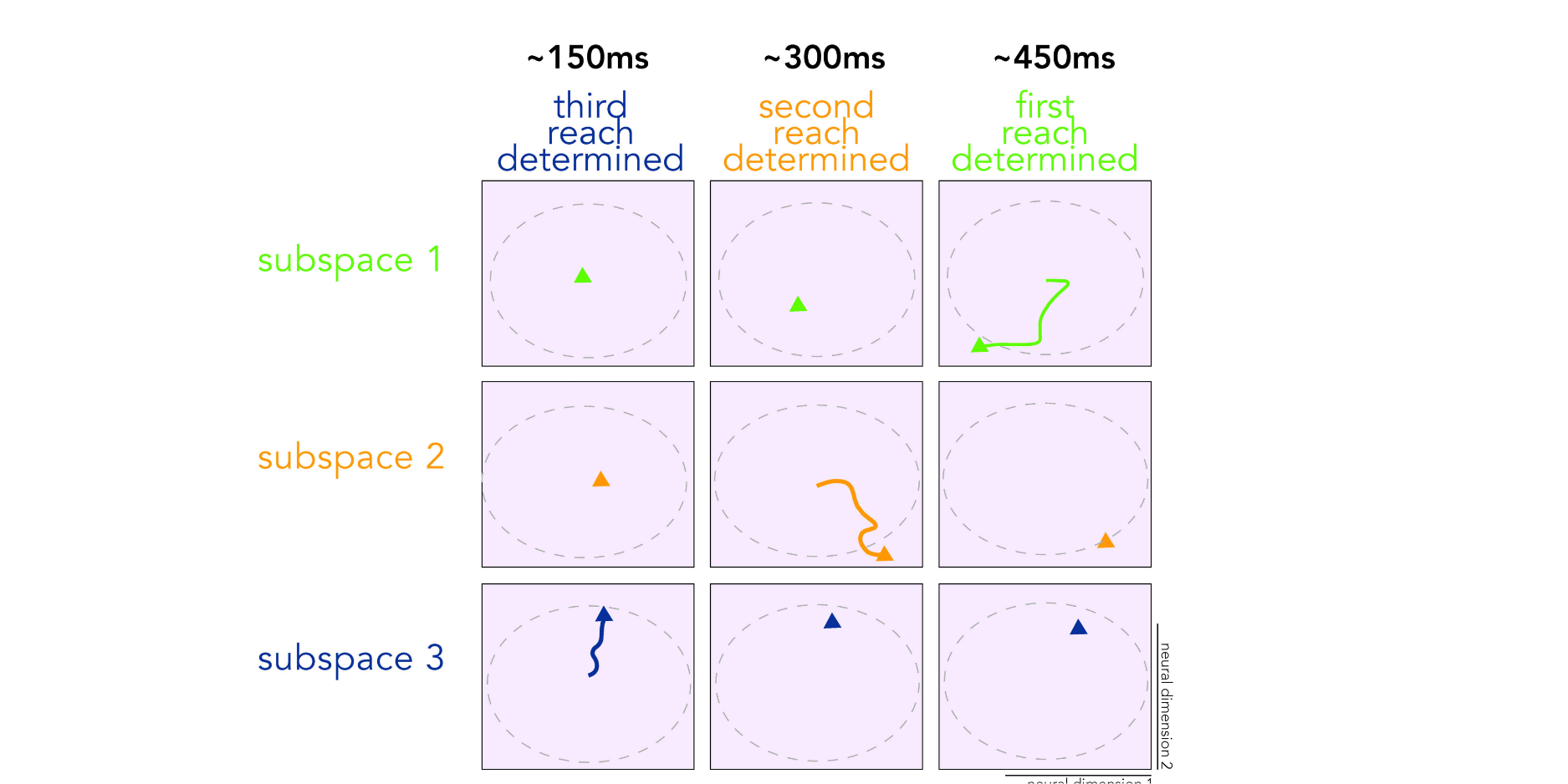

Neural activity projected into the three neural subspaces in the prefrontal cortex, each determining one decision. In this trial, we are watching each of the three decisions that the animal makes unfold in real time. This example illustrates that cognitive flexibility allows this animal to make a decision about his final action before making any decisions about his first or second actions.

Were you surprised when you saw the neural data of animals solving these problems in real time?

Yes, I was surprised by the degree to which he could be flexible. I thought I had designed a task in which there were three steps. I expected, maybe I even hoped, that you would see: now I'm doing step one, now I'm doing step two, now I'm doing step three, now I'm holding it all in my head, and you're good to go.

Instead, what we saw is sometimes he does that, but he's actually able to do those steps in any order. He doesn't need to make decisions in a stereotyped manner.

In hindsight, we should have expected this result; we asked the animal to do something intelligent, and many intelligent behaviours can be done in a myriad of different ways. And he absolutely does them in a myriad of different ways. Our theories are good enough now that we can actually see all of those on individual trials.

And that's remarkable, and it gets me really excited about how we will use this to study higher-order cognition and logical reasoning.

Do your findings change how you think about what reasoning is?

We've been studying neural computation through a ‘computation-through-dynamics’ lens for a long time.

Its core thesis is that, embedded within the responses of single neurons, there exists a smaller set of ‘computationally meaningful’ signals. By identifying and studying these signals, we can form rigorous hypotheses about the underlying computational processes of the circuit.

One of the things that's so striking is that this framework has allowed us to discover these highly labile and flexible subspaces. These signals are computationally meaningful and give us enormous insight into what the brain is actually trying to do. We’ve seen this across many diverse tasks and brain areas. We are now trying to understand how these subspaces might allow the brain to do something that you've never done before.
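The idea of a small set of signals embedded in the responses of many neurons can be sketched with a toy simulation. The assumptions are loud ones: the data are synthetic, there is a single latent "decision" signal, and the axis it lives on is taken as known. In real analyses that axis (or subspace) has to be estimated from recordings, and the interview does not say which method is used.

```python
import random

rng = random.Random(0)
n_neurons = 50

# Hypothetical loading vector: how strongly each neuron reflects the latent signal.
axis = [rng.gauss(0.0, 1.0) for _ in range(n_neurons)]
norm = sum(w * w for w in axis) ** 0.5
axis = [w / norm for w in axis]  # scale to unit length

# Latent signal: a decision variable that switches from 0 to 1 halfway through the trial.
latent = [0.0] * 10 + [1.0] * 10
gain = 5.0  # assumed scale of the latent signal relative to the noise

# Each time step: every neuron mixes the latent signal with its own private noise.
population = [
    [gain * z * w + rng.gauss(0.0, 0.2) for w in axis]
    for z in latent
]

# Projecting the population activity onto the axis recovers one low-dimensional signal.
readout = [sum(x * w for x, w in zip(rates, axis)) for rates in population]

early, late = readout[:10], readout[10:]
print("before the decision:", [round(v, 1) for v in early])
print("after the decision: ", [round(v, 1) for v in late])
```

No single simulated neuron shows the step cleanly, but the projection does, and it does so on a single trial. With several such axes, one per decision, the order and timing of the decisions can be read out in the same way.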

What's the next big question that your work opens up?

I'm particularly interested in studying the more general phenomenon of abstraction. This is the idea that you take knowledge and represent it in such a manner that you can reuse it in new situations. Because really, that's what makes you intelligent.

Biography

Saurabh Vyas completed undergraduate training in Biomedical Engineering and Electrical Engineering at Johns Hopkins University in 2012. While working on his MSE in Biomedical Engineering, Saurabh was a research engineer at the Applied Physics Laboratory. Saurabh completed his PhD in Bioengineering at Stanford University in 2020, where he was advised by Prof. Krishna Shenoy. His research was recognized with an NSF Graduate Research Fellowship and an NIH NINDS NRSA (F31) fellowship. In 2021, Saurabh's thesis was awarded the Donald B. Lindsley Prize by the Society for Neuroscience, which "recognizes a young neuroscientist's outstanding PhD thesis in the general area of behavioral (i.e., systems) neuroscience." Saurabh completed a postdoctoral fellowship at Columbia University in 2025, where he was co-advised by Profs. Mark Churchland and Michael Shadlen. Saurabh's work was recognized by both an NIH NINDS Ruth L. Kirschstein National Research Service Award (F32) and a K99/R00 Pathway to Independence Award. In January 2026, Saurabh joined the Neuroscience Institute at Carnegie Mellon University, where he leads the Laboratory of General Intelligence and Computation (LOGIC).