Mouse-controlled mouse helps researchers understand intentional control

Study sheds light on how the brain represents causally-controlled objects

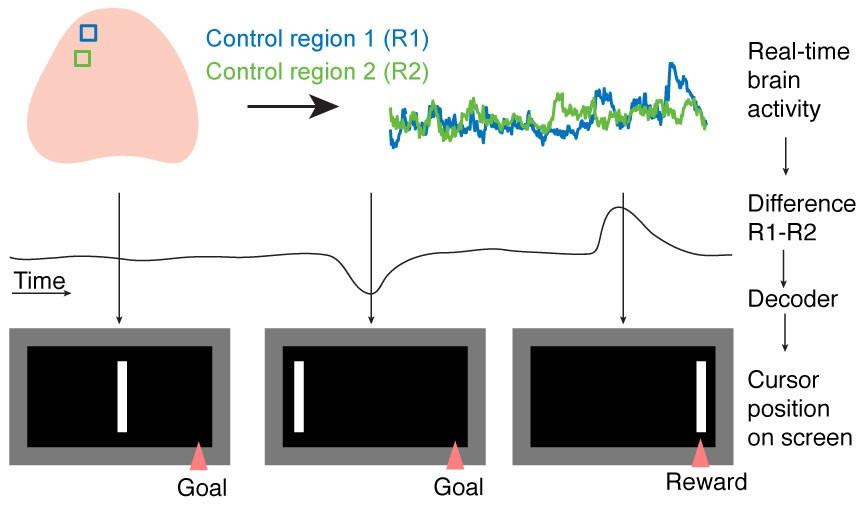

We know that the brain can direct thoughts, but how this is achieved is difficult to determine. Researchers at the Sainsbury Wellcome Centre have devised a brain machine interface (BMI) that allows mice to learn to guide a cursor using only their brain activity. By monitoring this mouse-controlled mouse moving to a target location to receive a reward, the researchers were able to study how the brain represents intentional control.

The study, published today in Neuron, sheds light on how the brain represents causally-controlled objects. The researchers found that when mice were controlling the cursor, brain activity in the higher visual cortex was goal-directed and contained information about the animal’s intention. This research could one day help to improve BMI design.

Top panel, from top to bottom: Running, licking and brain activity during training. Animals had to increase activity in control region 1 (R1, blue) compared to control region 2 (R2, green) to bring a visual cursor to a target and get a reward (the bottommost trace is the activity difference R1-R2). Cyan lines indicate trial starts, pink lines indicate success, and black lines indicate the end of a failed trial. Middle panel: Reconstruction of cursor location. The animal had to hold the cursor at position 8 on the screen for 300 ms to get a reward. Bottom panel: Video of brain activity, with the locations of R1 (blue box) and R2 (green box) indicated.

“Brain machine interfaces are devices that allow a person or animal to control a computer with their mind. In humans, that could be controlling a robotic arm to pick up a cup of water, or moving a cursor on a computer to type a message using the mind. In animals, we are using these devices as models for understanding how to make BMIs better,” said the paper’s first author, Dr Kelly Clancy, who completed the study at the Sainsbury Wellcome Centre, University College London, following previous work at Biozentrum, University of Basel.

“Right now, BMIs tend to be difficult for humans to use and it takes a long time to learn how to control a robotic arm, for example. Once we understand the neural circuits supporting how intentional control is learned, which this work is starting to elucidate, we will hopefully be able to make it easier for people to use BMIs,” said co-author of the paper, Professor Tom Mrsic-Flogel, Director of the Sainsbury Wellcome Centre, University College London.

Traditionally it has been difficult to study how causally-controlled objects are represented in the brain. Imagine trying to determine how the brain represents a cursor it is controlling versus a cursor it is passively watching. There are motor signals in the first case but not in the second, so it is difficult to compare the two. With BMIs, the subject doesn’t physically move, so a cleaner comparison can be made.

In this study, the researchers used a technique called widefield brain imaging, which allowed them to look at the whole dorsal surface of the cortex while the animal was using the BMI. This technique enabled an unbiased screen of the cortex to locate the areas that were involved in learning to intentionally control the cursor.

Brain activity in two control regions was read out in real time. A decoder transformed the difference in activity between the two regions into the position of a visual cursor on the screen. If the animal managed to differentially activate the regions in the correct manner, the cursor would travel to the target location at the edge of the screen and the animal would receive a soya milk reward.
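For readers who want a concrete picture of this kind of decoder, the short Python sketch below illustrates the general idea. It is not the researchers' code. The linear mapping, the activity range (`diff_min`, `diff_max`), the number of cursor positions and the 50 ms frame duration are all assumptions made for illustration; only the R1-minus-R2 difference, the target at position 8 and the 300 ms hold come from the description above.

```python
def decode_cursor_position(r1, r2, diff_min=-1.0, diff_max=1.0, n_positions=8):
    """Map the activity difference R1 - R2 onto a cursor position 1..n_positions.

    The linear mapping and the [diff_min, diff_max] range are illustrative
    assumptions, not values from the study.
    """
    diff = r1 - r2
    # Normalise the difference to [0, 1], clamping values outside the range.
    frac = (diff - diff_min) / (diff_max - diff_min)
    frac = min(max(frac, 0.0), 1.0)
    # Larger R1 relative to R2 moves the cursor towards the last position.
    return 1 + round(frac * (n_positions - 1))


def trial_succeeded(r1_trace, r2_trace, frame_ms=50.0, target=8, hold_ms=300.0):
    """Return True if the cursor stays on the target for hold_ms without a break.

    frame_ms (the time between samples) is a hypothetical value.
    """
    held_ms = 0.0
    for r1, r2 in zip(r1_trace, r2_trace):
        if decode_cursor_position(r1, r2) == target:
            held_ms += frame_ms
            if held_ms >= hold_ms:
                return True
        else:
            # Leaving the target resets the hold timer.
            held_ms = 0.0
    return False


if __name__ == "__main__":
    # Six consecutive frames with R1 well above R2: 6 x 50 ms = 300 ms on target.
    print(trial_succeeded([1.0] * 6, [0.0] * 6))
```

In this sketch, a brief drop in the R1-R2 difference resets the hold timer, so the animal would need to sustain the activity pattern rather than reach it momentarily.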

Visual cortical areas in mice were found to be involved during the task. These areas included the parietal cortex, an area of the brain implicated in intention in humans.

“Researchers have been studying the parietal cortex in humans for a long time. However, we weren’t necessarily expecting this area to pop out in our unbiased screen of the mouse brain. There seems to be something special about parietal cortex as it sits between sensory and motor areas in the brain and may act as a way station between them,” added Dr Kelly Clancy.

By delving deeper into how this way station works, the researchers hope to understand more about how control is exerted by the brain. In this study, mice learned to map their brain activity to sensory feedback. This is analogous to how we learn to interact with the world: for example, we adjust how we use a computer mouse depending on its gain setting. Our brains build representations of how objects typically behave, and execute actions accordingly. By understanding more about how such rules are generated and updated in the brain, the researchers hope to be able to improve BMIs.

This research was supported by the European Research Council, Swiss National Science Foundation, Gatsby Charitable Foundation, Wellcome, EMBO Long-term Fellowship, HFSP Postdoctoral Fellowship, and the Branco Weiss-Society in Science grant.

Source:

Read the full paper in Neuron: ‘The sensory representations of causally controlled objects’

Contact:

April Cashin-Garbutt

Head of Research Communications and Engagement

Sainsbury Wellcome Centre

a.cashin-garbutt@ucl.ac.uk